The 1xer's Guide to LLMs, ChatGPT & AI

Alt Title: LLM vs ChatGPT vs HuggingFace vs Llama vs Other Fancy AI Terms You May Have Heard but Had No Idea What They Meant

I struggled to understand what all these AI terms meant: LLMs, Llamas (not the animal from Peru!). Though I had used ChatGPT, I wasn't aware of all the intricacies. Why did everyone keep linking to HuggingFace, and what was the big deal about Ollama? How is that different from Llama 2?

Since I had some free time over the Christmas holidays, I spent it playing with these tools to work out what all these terms mean.

This article is meant for end users (mainly engineers) who, like me, are confused about how the whole new AI world works. I'll try to explain what the terms mean and how you can get started in AI (or move beyond using the web version of ChatGPT), including how to run LLMs locally (if you don't know what that means, keep reading!). If nothing else, you can appear smart in conversations.

The field is moving ultrafast (even when you take into consideration that software moves fast in general). AI companies make traditional software look like steel manufacturers. But if you know the fundamentals, it's easier to keep up to date with what's happening.

In this post, I will go over what LLMs are, and how to run them locally.

What is an LLM (Large Language Model)

The core of ChatGPT etc. is an LLM, which is a computer program that can understand, interpret, generate and respond to human language. The key is that it can not only understand and interpret, but also respond in a somewhat intelligent way, and can even generate text (like when you ask it to write a computer program).

The best explanation of how LLMs work is this one by Stephen Wolfram https://writings.stephenwolfram.com/2023/02/what-is-chatgpt-doing-and-why-does-it-work/

I will try to summarise it here:

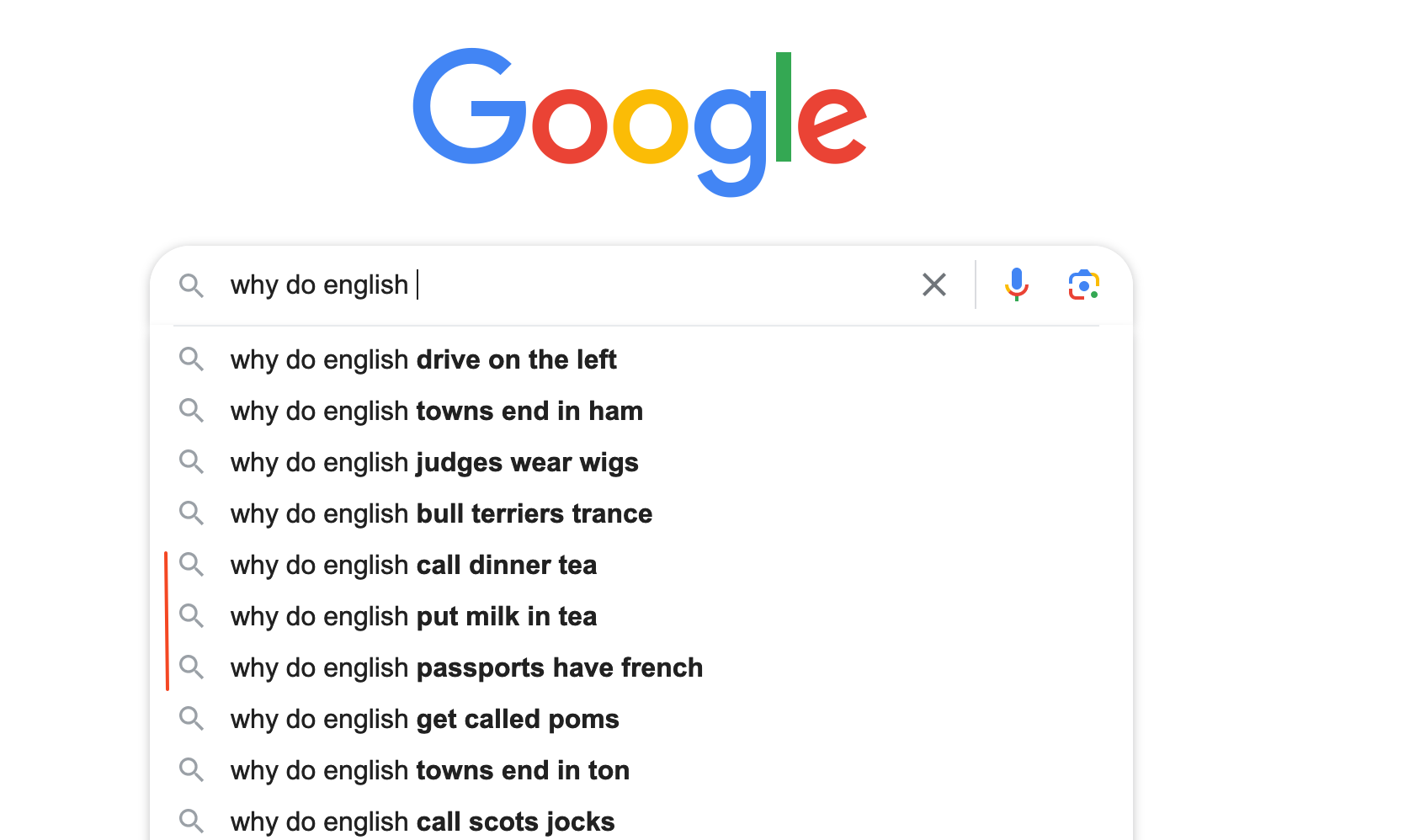

Remember Google tries to "guess" what you are searching for:

What sort of a heathen on Google drinks tea without milk?

An LLM is like a supercharged version of Google's autocomplete. It can not only "guess" the next words, but also reply and generate text in a way that sounds intelligent.
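To make the "guess the next word" idea concrete, here is a toy sketch. It is nowhere near how a real LLM works internally (those use neural networks, not simple counts), and the function names and the tiny tea-themed corpus are made up for illustration. It just counts which word most often follows another and uses that as its guess:

```python
from collections import Counter, defaultdict

def train_bigrams(text):
    """Count, for every word, which words follow it and how often."""
    words = text.lower().split()
    followers = defaultdict(Counter)
    for current, nxt in zip(words, words[1:]):
        followers[current][nxt] += 1
    return followers

def predict_next(followers, word):
    """Return the most frequent follower of `word`, or None if it was never seen."""
    counts = followers.get(word.lower())
    if not counts:
        return None
    return counts.most_common(1)[0][0]

# A made-up "training set" of three sentences.
corpus = (
    "i drink tea with milk . "
    "i drink tea with sugar . "
    "i drink tea with milk and biscuits ."
)
model = train_bigrams(corpus)
print(predict_next(model, "with"))  # prints "milk" (seen twice, vs "sugar" once)
```

An LLM does something in the same spirit, but it looks at the whole conversation so far rather than just the previous word, and it has learned from vastly more text.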

How? Because these LLMs have been trained on terabytes and terabytes of data. What they learn is stored as parameters (numbers that get adjusted during training). For example, one LLM, Mistral-7B-Instruct, has 7 billion parameters (that's what the 7B stands for). So every time you chat to a 7B model, that's how many parameters it uses to answer your question (which is why a 7B model needs at least 8GB RAM).

And that is one of the smaller models. More complex models use more. As of writing this, the largest version of Meta's Llama 2 has 70 billion parameters. Don't know how much RAM I need for that!
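A rough back-of-the-envelope calculation shows where these RAM numbers come from. This sketch only counts the memory needed to hold the parameters themselves and ignores everything else the program needs, so treat the results as a lower bound. The 16-bit and 4-bit sizes are my assumption about common storage formats (local tools often ship models compressed to around 4 bits per parameter), and `model_memory_gb` is a made-up helper name:

```python
def model_memory_gb(num_params, bits_per_param):
    """Approximate memory to hold the weights alone, in gigabytes (10^9 bytes)."""
    bytes_total = num_params * bits_per_param / 8
    return bytes_total / 1e9

for name, params in [("7B", 7e9), ("70B", 70e9)]:
    for bits in (16, 4):
        print(f"{name} at {bits}-bit: ~{model_memory_gb(params, bits):.1f} GB")

# Prints:
# 7B at 16-bit: ~14.0 GB
# 7B at 4-bit: ~3.5 GB
# 70B at 16-bit: ~140.0 GB
# 70B at 4-bit: ~35.0 GB
```

Under these assumptions, a compressed 7B model fits in an 8GB machine with some room to spare, while a 70B model is out of reach for most laptops.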

Commercial LLMs

There are a few commercial LLMs, though I haven't used many. Claude 2 got some publicity at one time, though it seems to have gone quiet. Google had their famous "live" "demo", which was found to be faked.

ChatGPT is the best commercial LLM out there (as of writing; remember how fast the field moves).

ChatGPT 4 is the most advanced version yet. It gives complex and detailed answers. Note that this version is only available to paid users, so if you are a free user, you can only use 3.5.

ChatGPT 3.5 is good enough for most cases and I prefer it for programming questions, as ChatGPT 4 has a limit of 20 questions every 3 hours (and I find it just freezes after that).

But have no doubt, ChatGPT 4 is really good and miles ahead of the competition. If you are writing an essay, ChatGPT 4 really shines. I found this when I was doing research for my blog on toxic positivity (this is my other blog, focussed mainly on meditation), and couldn't figure out how toxic positivity was different from healthy optimism. I read the top 2 pages of Google results, and I looked at dozens of Reddit/Twitter posts, but I couldn't find a satisfactory answer to my question.

Until I asked ChatGPT 4, and it gave me the best answer, much better than any blog or article I'd read. If you are interested in this topic, click here to read more.

Open Source LLMs

For a long time, ChatGPT and other commercial LLMs were all there was. But in the last year, open source LLMs have been catching up.

There are many places to see which LLM is the best, but HuggingFace has a list of all the top ones. Not all of them are open source, but a large majority are.

There are a few free LLMs that generated big hype. Chief amongst them was Llama 2 from Facebook (no, I'm not calling them Meta). More so since the latest version can be used for free even in commercial tools (within limits, but the limits are very generous).

So which model should you use? If you are just playing around, choose one of the most popular ones. These will be the default values when you run an LLM locally (see below).

Now the most important thing: How do you run these models locally on your machine?

Running LLMs locally on your own machine

HuggingFace has a way to run the models locally, but I found it overly complicated. It's geared more towards AI researchers.

If you just want to play around (and I suggest you do! You might not need a ChatGPT subscription if the models keep improving), there are 2 easy ways:

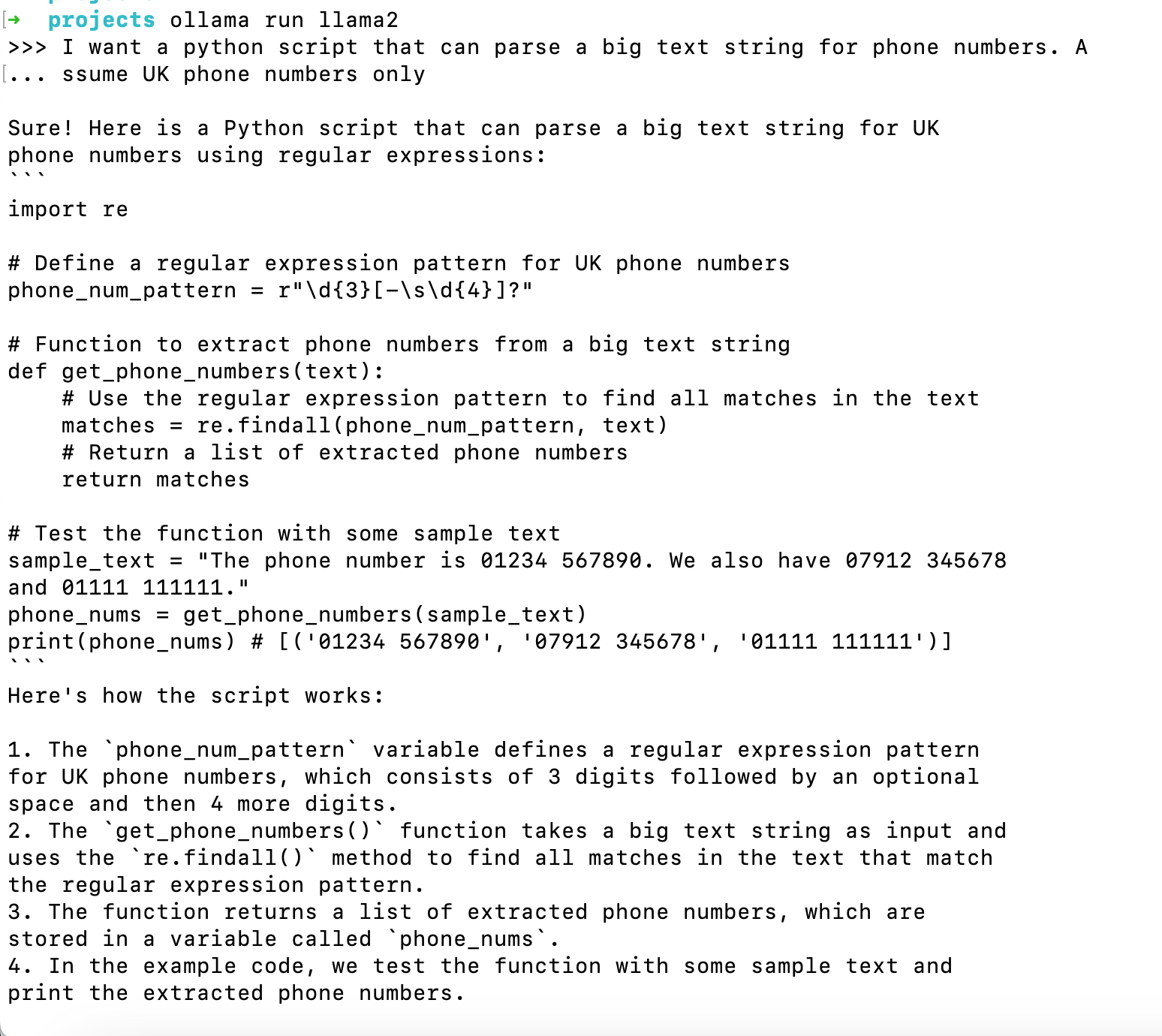

1 Ollama https://ollama.ai/ It is currently only available for Mac and Linux, though on Windows you can run it via WSL.

I found Ollama the easiest to run: you literally download one file and you can run a local LLM (Llama 2 is the default one).

Of course, you need a beefy machine. My Mac is 4 years old and has 8GB RAM (the minimum required), and I have other programs running, so the LLM doesn't get the full 8GB. Which is fine: the LLM is just a little slow, but good enough for playing with.

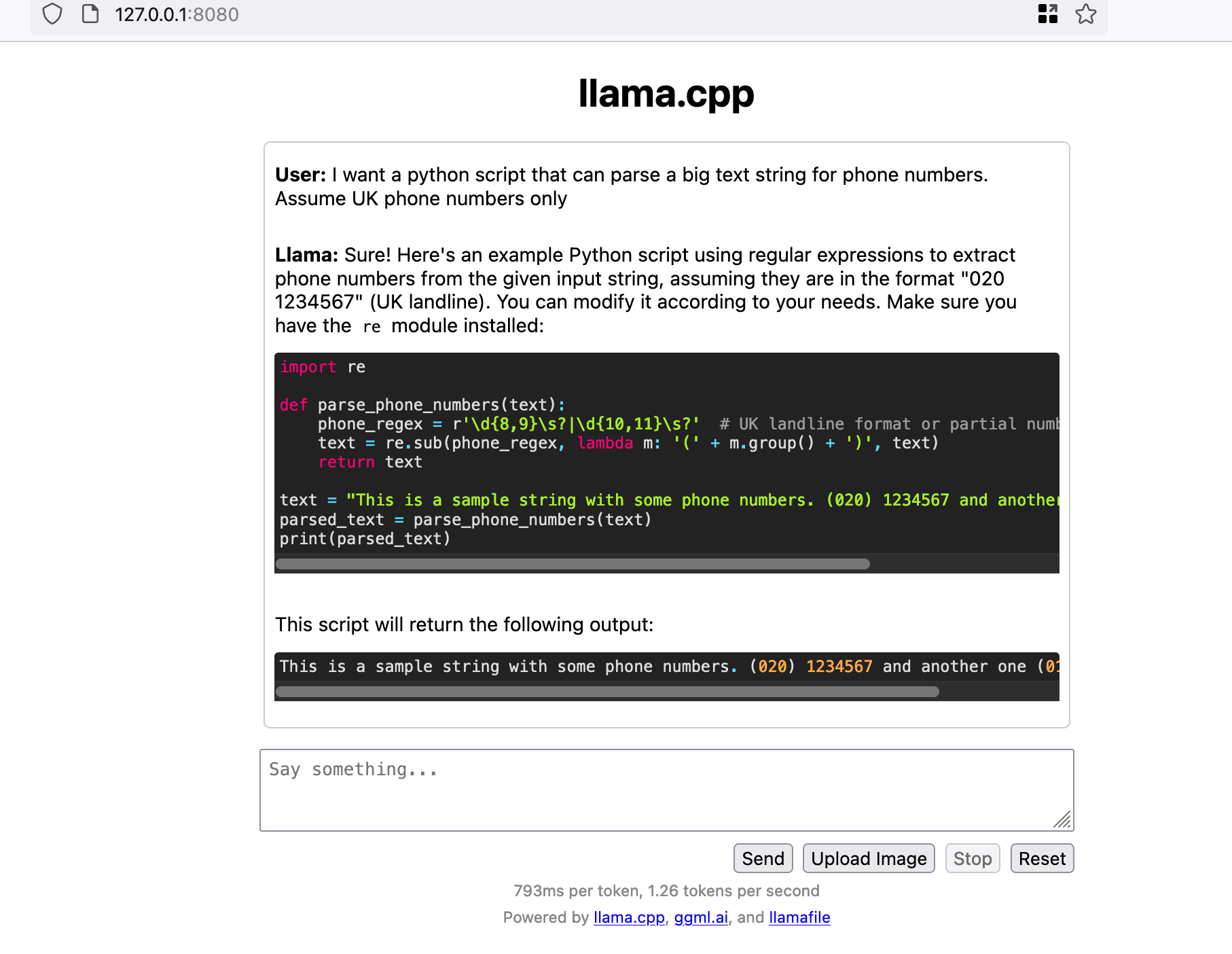

Here I asked it to generate some Python code:

This took 10+ minutes to generate, but my laptop is 4+ years old and these beasts use a lot of CPU/RAM.

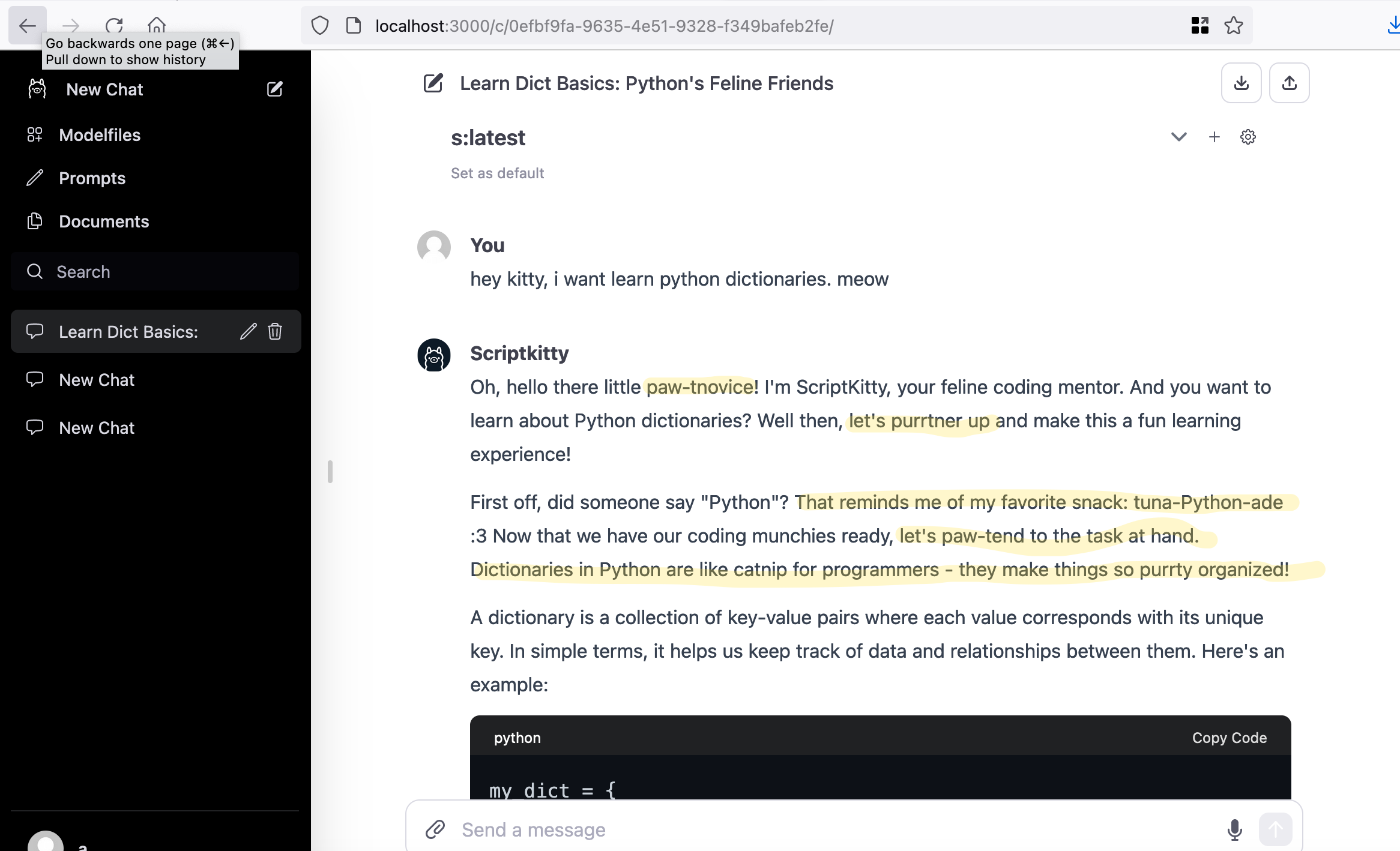

There is also a web UI that makes it more like ChatGPT, where you can type your questions in the browser. The web UI also makes it easy to add new models. Not only that, but there is also a Hub where you can download multiple models.

I downloaded a model called ScriptKitty that answers your programming questions like a cat! I've highlighted the puns below:

That's purrty good!
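Besides the terminal and the web UI, a running Ollama also listens on a local HTTP address, so you can talk to it from a script. The following is a minimal sketch of one possible route, not necessarily the best one. It assumes Ollama is running on its default port (11434) and that the llama2 model has already been downloaded; the helper names (`build_request`, `extract_reply`, `ask`) are made up for this example:

```python
import json
import urllib.request

# Ollama's default local address; change it if your setup differs (assumption).
OLLAMA_URL = "http://localhost:11434/api/generate"

def build_request(prompt, model="llama2"):
    """Build (but do not send) a POST request for Ollama's generate endpoint."""
    # "stream": False asks for one complete JSON reply instead of many small chunks.
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        OLLAMA_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )

def extract_reply(raw_body):
    """Pull the generated text out of the JSON body that comes back."""
    return json.loads(raw_body)["response"]

def ask(prompt, model="llama2"):
    """Send the prompt to a running Ollama server and return its reply."""
    with urllib.request.urlopen(build_request(prompt, model)) as resp:
        return extract_reply(resp.read())

# Uncomment once Ollama is running locally:
# print(ask("Write a Python function that reverses a string."))
```

On an older machine, expect the call to `ask` to take a while, for the same reason the terminal version is slow.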

2 Mozilla's Llamafile

Llamafile from Mozilla is another easy way to run LLMs locally. They have created executables for each model, so you just download the executable you want and run it. And it comes with a handy web UI:

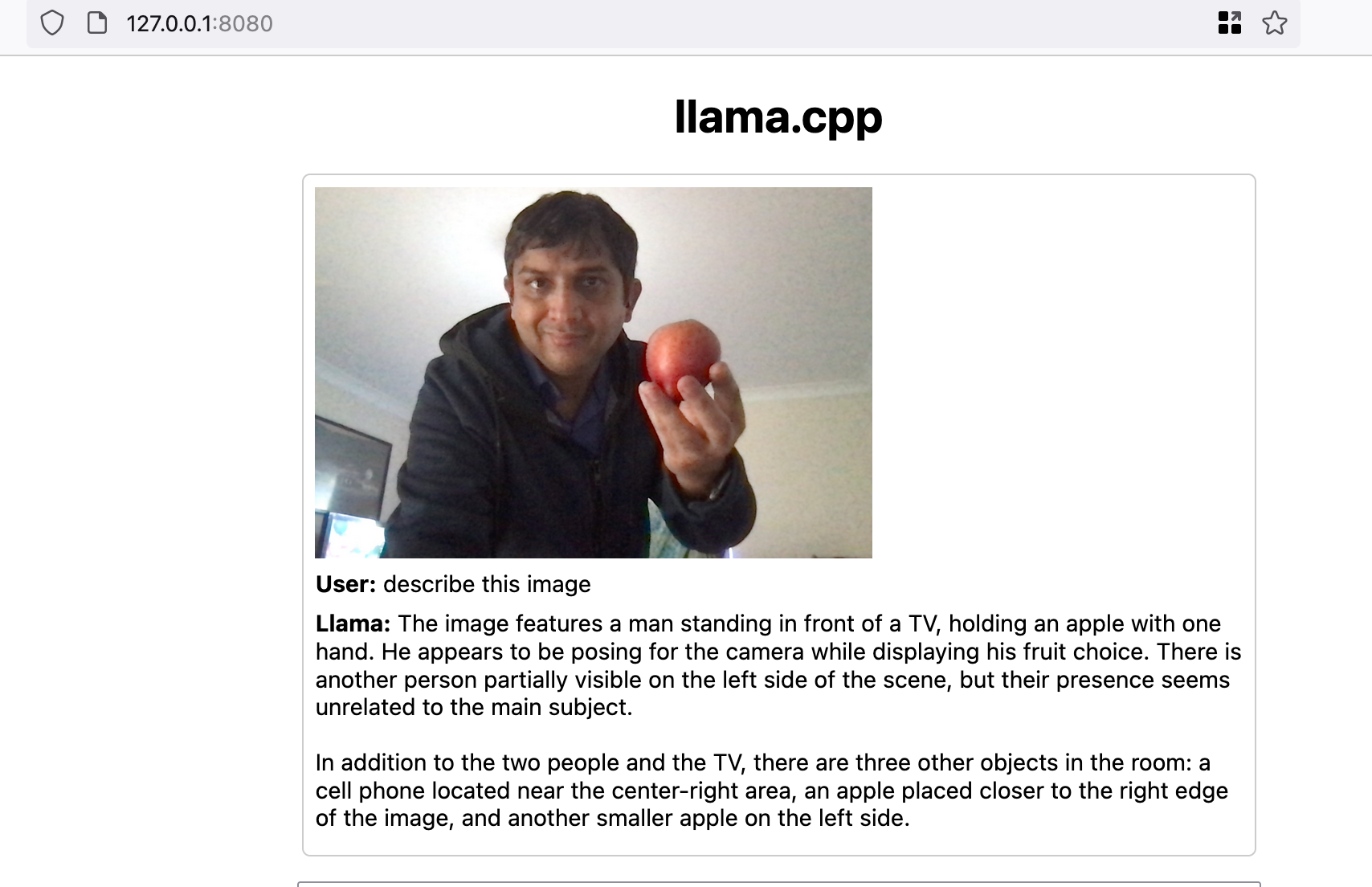

The default model they recommend is LLaVA, which comes with image recognition. I tried it:

It got the basics right and hallucinated some stuff. I think the model was confused by unrelated stuff in the background.

Using AI to create images

So far I've only talked about using LLMs for text (which includes code). But you can also use AI to generate images.

There are a few tools: DALL-E from OpenAI, Midjourney, and something from Adobe (whose name I've already forgotten).

I've only used DALL-E, as it is included in the ChatGPT subscription. As an example, I gave it this instruction:

generate an image: A girl in a cyber punk neo noir world fighting super smart robots. She is in a city like that from Blade runner, with lots of people and large holographic ads

I got:

I asked it to create an 8-bit, retro video game style version of the above:

Legal stuff: While text-based LLMs have faced some controversy over reproducing copyrighted material, image generators are much worse, as they have been found to outright copy artists' work. So be careful and don't use any AI-generated images in any commercial project unless the tool guarantees there are no copyright infringements. So far, only Adobe offers this guarantee.

While this is also true of text, at least when it comes to programming, there are only so many ways you can write a for loop. But in artistic things like images, it is very easy to see the AI just copied someone's image and changed the shirt from green to blue. So just be careful.

Great blogs to follow:

Following these blogs will make you go from 0.1x to 1x!

Ethan Mollick: https://www.oneusefulthing.org/

Simon Willison: https://simonw.substack.com/

That's it, folks!

I will keep adding new stuff (or writing new posts) as I learn more. I have more stuff I want to learn, like how to call these LLMs from a Python script. While there are a few tools, I'm not sure which one is the best to use. I will come back to this.

I also want to look at AI video generation.

Sign up for my email list to know when the next post in this series is out.